Machine learning may be neck and neck with “big data” in the race to become Hollywood’s favorite catch-all term for technology as magic. As with big data, that outsider interest is driven by real successes of the approach. “We didn’t know how to get a car to drive itself 10 years ago. Now we are very close,” says Thibaut Lamadon, an assistant professor of economics at the University of Chicago. Netflix recommendations, AI assistants in our homes, and even our image searches for cat pictures have been invisibly improved by machine learning, a branch of computer science focused on giving computers the ability to recognize patterns and to learn and adapt to given contexts without being explicitly programmed for them. “Machine learning really has had undeniable success,” says Lamadon. “And this is thanks to the fact that people have developed those particular set of statistical methods that have been branded as machine learning.”

The question that Lamadon and his colleagues want to answer with a September 2016 conference: can economists embrace the method and its potential to parse complex datasets while being satisfied that the inner workings of such models hold up to the level of statistical accuracy economists expect? The major successes of machine learning applications offer promise, and rigorously understanding the method’s potential is the next step toward incorporating it into economists’ own work.

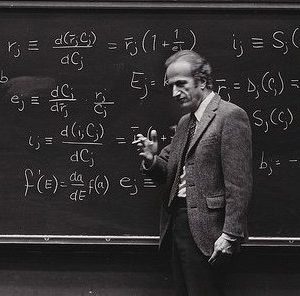

To that end, Stephane Bonhomme, Lars Peter Hansen and John Lafferty, along with Lamadon, have worked to gather economists, statisticians and computer scientists to discuss what machine learning is and how it might be used to consider economic problems. Key to that question: what are the successes machine learning has had thus far? And what questions should economists think through before embracing machine learning in their own methods of problem solving?

In the context of economics, what is machine learning? Not magic, but new methods: powerful nonparametric statistics, to be specific. “The big change will be that, in practice, it may be much more efficient to use,” says Lamadon. Nonparametric statistics play an important role in allowing economists to establish causal inference in their models, despite many parameters that can make it tricky to link cause to effect. “Nonparametrics minimize the assumptions we are making when trying to infer the effect of a social policy, for example, on certain outcomes,” writes Stephane Bonhomme. “Machine learning potentially offers appealing alternative tools, in addition to kernel or series methods which are known to economists.”
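For readers unfamiliar with the kernel methods Bonhomme mentions, the sketch below may help. It is an illustrative example only, not anything presented by the conference organizers: a Nadaraya-Watson kernel regression, which estimates an outcome at a point as a weighted average of nearby observations instead of assuming a fixed functional form. The data, the function names, and the bandwidth value are all hypothetical choices made for the illustration.

```python
import math


def gaussian_kernel(u):
    """Standard normal density, used here as the weighting function."""
    return math.exp(-0.5 * u * u) / math.sqrt(2.0 * math.pi)


def kernel_regression(x_train, y_train, x0, bandwidth):
    """Nadaraya-Watson estimate of E[y | x = x0].

    The estimate is a weighted average of the observed y values, with
    weights that shrink as an observation's x moves away from x0.
    No linear (or other parametric) form is imposed on the relationship.
    """
    weights = [gaussian_kernel((x0 - x) / bandwidth) for x in x_train]
    total = sum(weights)
    if total == 0.0:
        raise ValueError("no observations near x0; try a wider bandwidth")
    return sum(w * y for w, y in zip(weights, y_train)) / total


# Hypothetical data: an outcome that responds nonlinearly to some variable x.
x_train = [i / 10.0 for i in range(0, 51)]  # 0.0, 0.1, ..., 5.0
y_train = [math.sin(x) for x in x_train]

# Estimate the outcome at x = 2.0 without specifying the shape of the curve.
estimate = kernel_regression(x_train, y_train, x0=2.0, bandwidth=0.2)
print(estimate)
```

The bandwidth controls how local the average is: a smaller value tracks the data more closely, while a larger one smooths more heavily. Choosing it is one of the assumptions an economist would want stated explicitly.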

Computational scholars have already brought these techniques to bear in other academic disciplines, including biology and physics. One such computer scientist, Risi Kondor, has helped apply machine learning to understanding the complexities of molecular dynamics, and his work has also been applied to biological problems, such as understanding gene regulatory networks. The “inherently interdisciplinary” spirit of machine learning has driven those working with the technique to reach out and work within a wide range of disciplines, writes Kondor. “The whole point of machine learning is to have an impact on other fields and to lead to real world applications. So, by definition, if machine learning makes a contribution to, say, economics, then that is a huge success for us.”

Economists can offer a unique challenge to these eager collaborators in statistics and computer science: complex, policy-relevant data on the major market forces that shape our daily lives. “That data richness provides flexible ways to make predictions about individual and market behavior, including assessment of credit risk when taking out loans and implications for markets of consumer goods including housing,” says Institute Director Lars Hansen. Reaching out beyond economics for fresh thinking on the academic challenges involved in investigating that data is vital for the future of the discipline, and a central reason for September’s conference.

Some of the work to be done is fundamental: orienting economists to the ways that machine learning models function and what sorts of prior assumptions go into them. “Unfortunately these methods rarely come with a user guide for economists,” says Bonhomme. But there is also a need more specific to economics, and to econometrics in particular: scholars need to understand the method’s inner workings.

Economists care as much about what’s inside a model as what goes in and comes out. They tend to be very careful to generate counterfactuals: alternative explanations to the causal claims within their models. “When we write economic papers, we’re very careful about laying down the assumptions that are going to pin down the counterfactual,” says Lamadon. Those assumptions are not always as clear in machine learning models, and economists need to understand how to look at a machine learning model and the computational design assumptions that would shape its counterfactuals, including the statistical properties that lie within an otherwise “black box” of a modeling method.

Economists bring a healthy level of skepticism to the table with them, but they understand that this collaboration with computer scientists and statisticians is key to more richly parsing the questions that occupy their time.

Hansen writes that “while the data may be incredibly rich along some dimensions, many policy-relevant questions require the ability to transport this richness into other hypothetical settings as is often required when we wish to know likely responses to new policies or changes in the underlying economic environment.”

“By organizing conferences like the September 23–24, 2016 event, the Becker Friedman Institute is nurturing a crucial next step of how best to integrate formal economic analysis to addressing key policy questions. We seek to further the communication among a variety of scholars from computer science, statistics and economics in addressing new research challenges.”

—Mark Riechers